Inspired by my previous interview with Shanahan, I felt the need to dive deeper into the characteristics of intelligence. We know that human intelligence is dependent on embodiment and environment. If we were to develop an AGI (Artificial General Intelligence), a computational form of intelligence as intelligent as humans, it too would need this dynamic in play. Basically, an AGI agent would need to be stimulated by a physical or virtual environment, and be able to act on it through some sort of actuation.

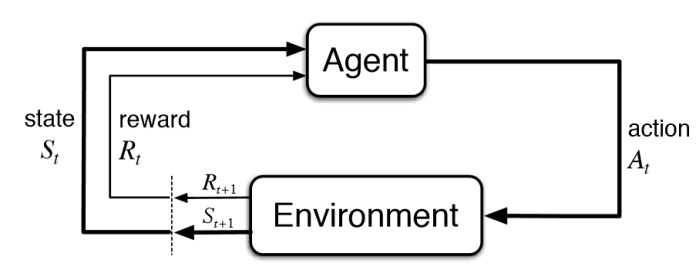

The agent exerts an action within an environment, and the environment influences the agent through stimulation and experiences. The agent would also have other inputs and characteristics, such as ability, goals and knowledge, dictating its behaviours and actions.

This sort of action/reaction is seen in reinforcement learning (RL), a form of machine learning that uses a reward system to optimise movements or actions. Here too, an agent acts on the environment, and the environment responds by updating the agent's current state and issuing a reward. This feedback loop allows the agent to learn as it acts in order to maximise its reward or goal function.
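
To make the feedback loop concrete, here is a minimal sketch using tabular Q-learning, one common RL method among many. The `Corridor` environment, the `train` function and all parameter values are hypothetical, chosen only for illustration: the agent starts at the left end of a short corridor and is rewarded when it reaches the right end.

```python
import random


class Corridor:
    """Hypothetical toy environment: cells 0..length-1, reward at the far right."""

    def __init__(self, length=5):
        self.length = length
        self.state = 0

    def reset(self):
        self.state = 0
        return self.state

    def step(self, action):
        # Action 0 moves left, action 1 moves right; the walls clamp the position.
        move = 1 if action == 1 else -1
        self.state = min(max(self.state + move, 0), self.length - 1)
        done = self.state == self.length - 1
        reward = 1.0 if done else 0.0
        return self.state, reward, done


def train(env, episodes=300, alpha=0.5, gamma=0.9, epsilon=0.2, seed=0):
    """Tabular Q-learning: the agent acts, the environment returns state and reward."""
    rng = random.Random(seed)
    q = [[0.0, 0.0] for _ in range(env.length)]  # q[state][action]
    for _ in range(episodes):
        state = env.reset()
        done = False
        while not done:
            # Explore occasionally (or when undecided), otherwise act greedily.
            if rng.random() < epsilon or q[state][0] == q[state][1]:
                action = rng.randrange(2)
            else:
                action = 0 if q[state][0] > q[state][1] else 1
            next_state, reward, done = env.step(action)
            # Move the estimate towards reward plus discounted future value.
            target = reward + (0.0 if done else gamma * max(q[next_state]))
            q[state][action] += alpha * (target - q[state][action])
            state = next_state
    return q


q_table = train(Corridor())
# Greedy action for each non-terminal cell (1 means "move right").
greedy_policy = [0 if left > right else 1 for left, right in q_table[:-1]]
print(greedy_policy)
```

The agent is never told where the goal is. It only ever sees its state and a reward, and the preference for moving right emerges from repeated interaction.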

Professor Shanahan had referred me to his latest research paper, titled “Artificial Intelligence and the Common Sense of Animals”. The paper argues that the development of common sense (defined as an intertwined set of fundamental principles and abstract concepts), as possessed by many animals, is based on a basic understanding of the concepts of objects, space and causality. This was done through deep reinforcement learning methods within 3D environments.

Some interesting points were made in the paper that resonated with my research. One idea was the notion of relativity when understanding concepts. If a machine (the same applies to any living being) were to interpret an object O that undergoes an action A, it would need a basic understanding of what an object is, what actions are, and what sort of motion dictates the specific action A. In effect, the relationship and interaction between the object O and action A requires an understanding of causality, space, motion and the objects that undergo these transformations. It’s all relative.

Unlike humans or animals, an AI cannot be motivated to do tasks of its own volition through basic needs and requirements. Machines are typically programmed with predefined tasks from their inception. Putting a machine in a box, and not telling it to do anything about the box or about escaping, could result in it not attempting anything at all. If it were in a typical RL setup, where through trial and error the agent maximises its reward over time with a final goal of escaping, the potential idling or erratic behaviour would be avoided.

In their current state, intelligent AI systems are not data efficient; they need more training examples than a human would to execute the same task. They are brittle, affected by every small change in their environment, and limited to tasks that are specific and inflexible. Human intelligence and common sense are built on the idea that we can transfer knowledge across various tasks.

Most AI systems are programmed with certain tasks or final goals. Animals and humans don’t follow this pattern. Our existence could be defined as one long task. An AI could approximate this with RL systems that are taught a curriculum, starting with smaller and easier tasks and eventually moving to more complex ones, all within a single extended framework of existence.
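
As a rough illustration of the curriculum idea, the sketch below trains a single tabular Q-learning agent through three stages of increasing difficulty without ever resetting what it has learned. Everything here is an assumption made for the example: the task (reach a goal from a given starting distance), the stage distances, and names such as `run_episode` and `train_with_curriculum`.

```python
import random

MAX_DISTANCE = 8  # hypothetical upper bound on distance from the goal


def run_episode(q, start, rng, alpha=0.5, gamma=0.9, epsilon=0.2):
    """Run one Q-learning episode from `start`; return the number of steps taken."""
    d, steps = start, 0
    while d > 0:
        # Explore occasionally (or when undecided), otherwise act greedily.
        if rng.random() < epsilon or q[d][0] == q[d][1]:
            action = rng.randrange(2)
        else:
            action = 0 if q[d][0] > q[d][1] else 1
        # Action 1 steps towards the goal, action 0 steps away (clamped).
        next_d = d - 1 if action == 1 else min(d + 1, MAX_DISTANCE)
        reward = 1.0 if next_d == 0 else 0.0
        target = reward + (gamma * max(q[next_d]) if next_d > 0 else 0.0)
        q[d][action] += alpha * (target - q[d][action])
        d, steps = next_d, steps + 1
    return steps


def train_with_curriculum(stages=(2, 4, 8), episodes_per_stage=200, seed=0):
    """Train on easy starts first; the same Q-table persists across all stages."""
    rng = random.Random(seed)
    q = [[0.0, 0.0] for _ in range(MAX_DISTANCE + 1)]  # q[distance][action]
    for start in stages:
        for _ in range(episodes_per_stage):
            run_episode(q, start, rng)
    return q


q_table = train_with_curriculum()
# Greedy action for each distance 1..MAX_DISTANCE (1 means "towards the goal").
policy = [0 if away > toward else 1 for away, toward in q_table[1:]]
print(policy)
```

The point of the design is that the Q-table is created once and carried through every stage, so what the agent learns close to the goal is already in place when the harder, longer tasks begin.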

Back to the idea of an agent interpreting an object, action and environment. For an agent to understand that an object is an object, the agent would need to afford a sense of occupancy to the object: it takes up and translates through 3D physical space, and it persists through time (a property known as object permanence). The object can be moved and investigated; looked through, under or behind to reveal other entities.

Modern RL systems can learn complex tasks in 3D space, but this is done at the pixel level, instead of by developing prior knowledge (common sense) of the environment and the spaces these 3D objects occupy. This common sense is purely physics based. Other forms, like social and psychological concepts, would be another problem in its entirety. There are many disparate and simultaneous levels to the construction of an AGI. With the correct environments, frameworks and curriculums, we could potentially fix this common sense problem, and thereafter we would be better equipped to deal with the task of language, where things tend to be even more abstract and complex.